AI Engineering · Apr 18, 2026 · 12 min read

Wire OpenTelemetry spans for agent runs with GenAI attributes, using console export for dev and OTLP for production tracing.

Deep Dive

·

Apr 12, 2026

·

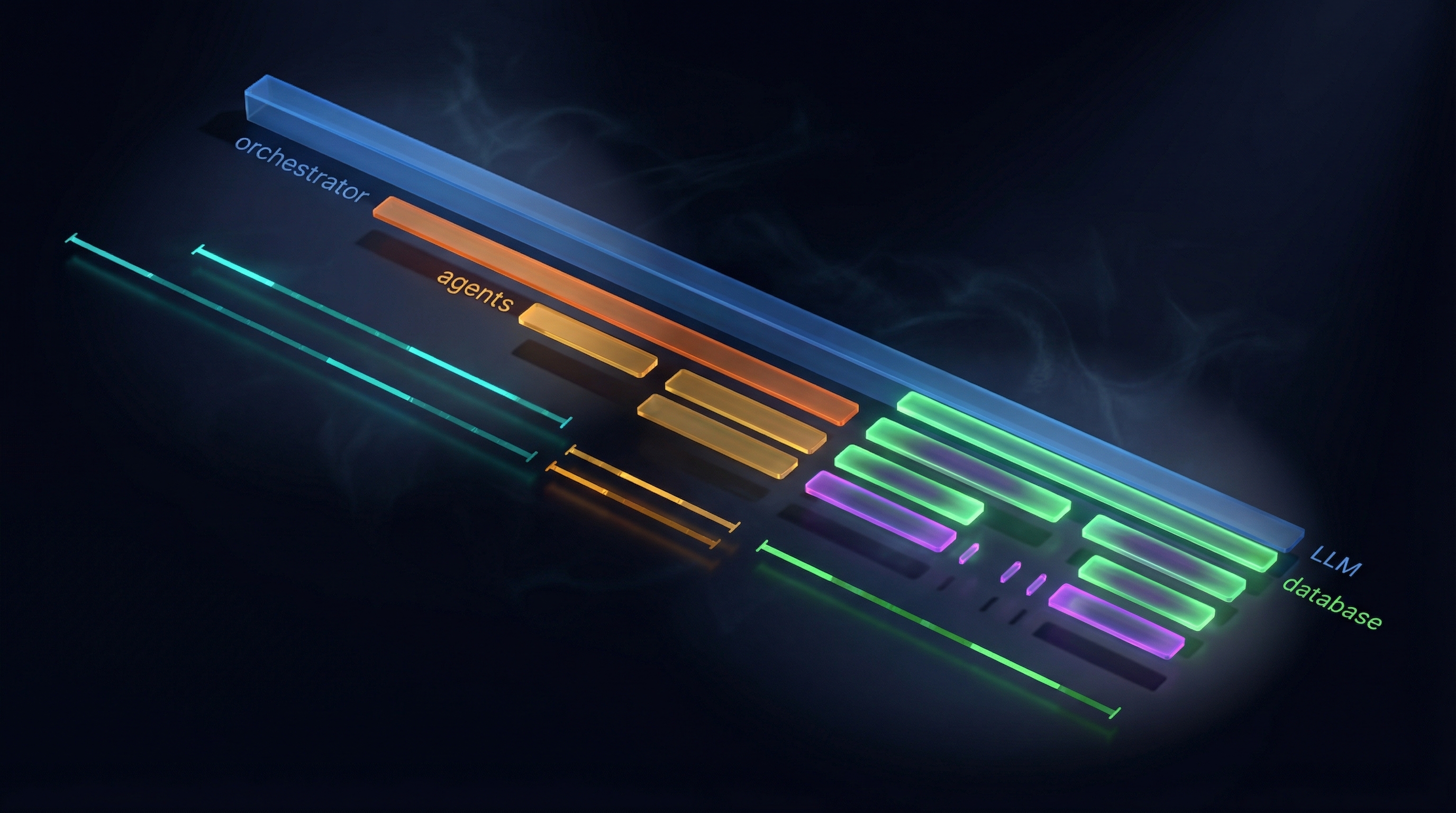

9 min read Most AI agent tutorials end at “hello world.” You build a single agent with one or two tools, it answers a few questions, and that is it. The gap between that tutorial and a production system with multiple agents, authentication, observability, and a real frontend is enormous.

Deep Dive · Apr 12, 2026 · 14 min read

Revised with .NET examples. A newer version of this article, covering both Python and .NET, is available as part of the MAF v1: Python and .NET series: MAF v1 — 07-observability-otel.

Deep Dive · Dec 20, 2025 · 10 min read

The infrastructure overhead in microservices projects is real. Setting up databases, wiring service discovery, getting distributed tracing working, and managing connection strings across environments: this work hits before you’ve written a line of business logic. On more than one project, I’ve watched the first sprint disappear into Docker Compose files and appsettings drift.

Deep Dive · Dec 6, 2025 · 24 min read

If you’ve ever built a microservices architecture, you know the pain points all too well: spending hours setting up PostgreSQL locally, wrestling with Redis configurations, debugging why RabbitMQ won’t connect, managing connection strings across multiple services, and let’s not even talk about implementing distributed tracing manually.