Deep Dive · May 25, 2025 · 9 min read

In the rapidly evolving landscape of artificial intelligence, development teams face significant challenges when integrating multiple AI models into their workflows. The proliferation of different providers, APIs, and pricing models creates complexity that can slow down innovation and increase technical debt. This article explores a powerful solution: a Docker-based setup combining LiteLLM proxy with Open WebUI that streamlines AI development and provides substantial benefits for teams of all sizes.

Deep Dive

·

Apr 24, 2025

·

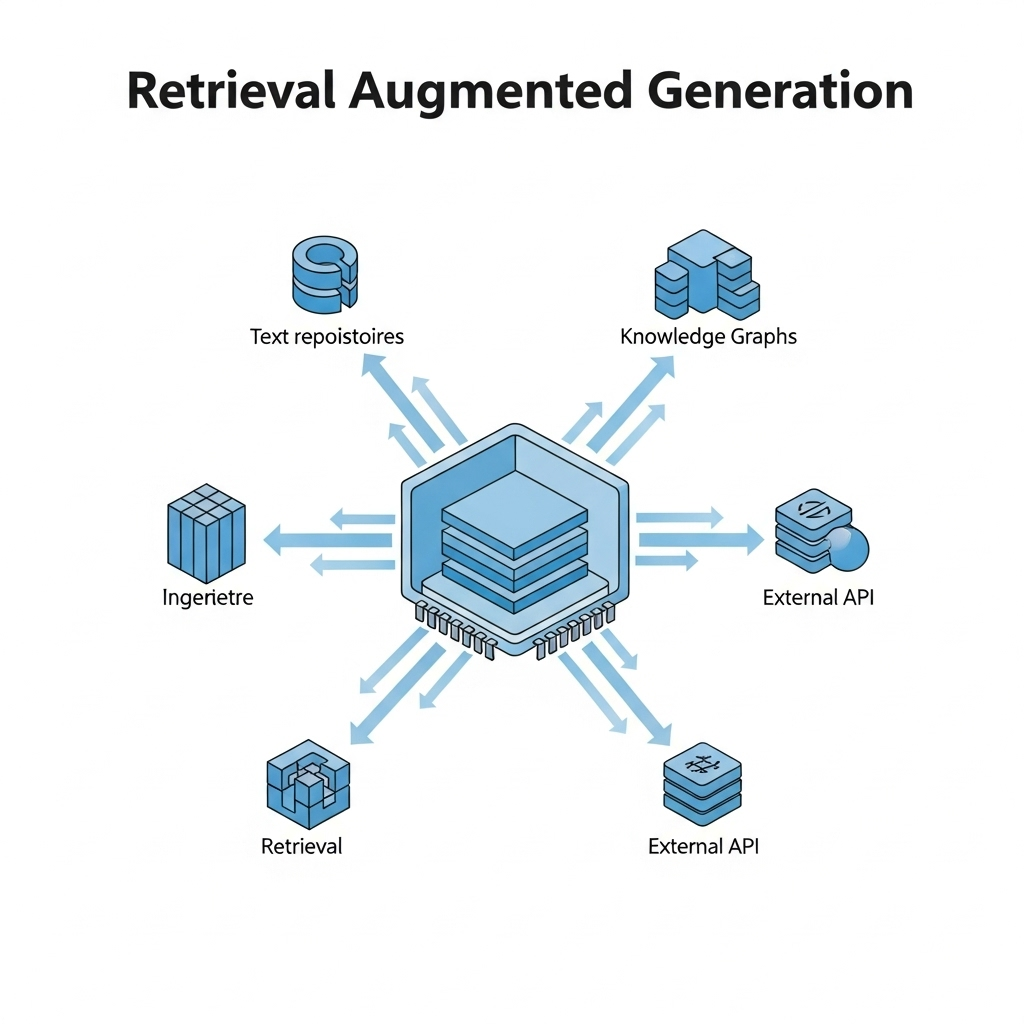

16 min read TL;DR: This guide walks you through building a production-ready RAG system using FastAPI, ChromaDB, MinIO, and OpenAI. Learn document chunking, vector embeddings, hybrid search, and real-world deployment strategies.

Introduction: As a .NET developer watching the AI landscape evolve, I found myself both excited and skeptical. When tools like Claude.ai and ChatGPT started offering out-of-the-box RAG solutions, I wanted to build my own system with full control over the implementation.

Deep Dive · Jan 6, 2025 · 15 min read

TL;DR: This article demonstrates how to build a REST API that converts natural language into SQL queries using multiple LLM providers (OpenAI, Azure OpenAI, Claude, and Gemini). The system dynamically selects the appropriate AI service based on configuration, executes the generated SQL against a database, and returns structured results. It includes a complete implementation with a service factory pattern, Docker setup, and example usage.
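To give a feel for the configuration-driven provider selection described above, here is a minimal sketch of a service factory in Python. It is not the article's implementation: the names `SqlGenerator`, `StubGenerator`, `create_generator`, and the `provider` config key are all hypothetical, and the stub returns a placeholder string instead of calling any real provider API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Protocol


class SqlGenerator(Protocol):
    """Hypothetical interface each provider-specific service would satisfy."""

    def generate_sql(self, question: str) -> str: ...


@dataclass
class StubGenerator:
    """Stand-in for a real provider client (assumption: no network calls here)."""

    provider: str

    def generate_sql(self, question: str) -> str:
        # A real service would send the question to the provider's API
        # and return the SQL it produces; this stub only labels the request.
        return f"-- [{self.provider}] SQL for: {question}"


# Registry mapping a config value to a constructor for that provider's service.
_REGISTRY: Dict[str, Callable[[], SqlGenerator]] = {
    "openai": lambda: StubGenerator("openai"),
    "azure-openai": lambda: StubGenerator("azure-openai"),
    "claude": lambda: StubGenerator("claude"),
    "gemini": lambda: StubGenerator("gemini"),
}


def create_generator(config: dict) -> SqlGenerator:
    """Pick the service named by config['provider'] (hypothetical key)."""
    name = str(config.get("provider", "")).strip().lower()
    if name not in _REGISTRY:
        known = ", ".join(sorted(_REGISTRY))
        raise ValueError(f"Unknown provider {name!r}; expected one of: {known}")
    return _REGISTRY[name]()


if __name__ == "__main__":
    generator = create_generator({"provider": "claude"})
    print(generator.generate_sql("How many orders shipped last week?"))
```

Keeping the provider lookup in one registry means the calling code depends only on the shared interface, so switching providers is a configuration change instead of a code change.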