Quick Takes

·

Nov 23, 2025

·

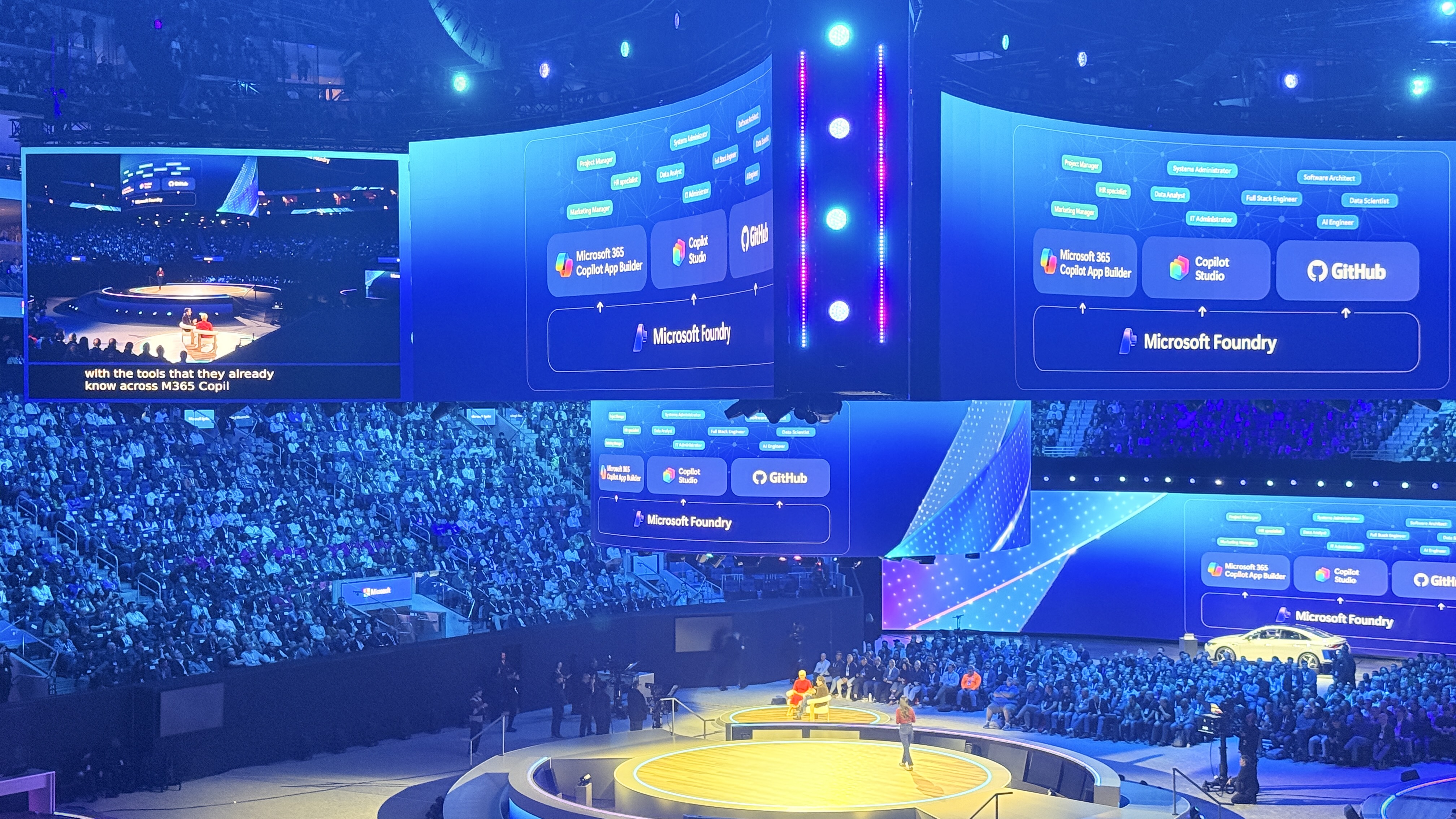

11 min read Overview # I just returned from Microsoft Ignite in San Francisco, and if you’re building AI agents for enterprise, this was the event you needed to attend.

Quick Takes

·

Oct 24, 2025

·

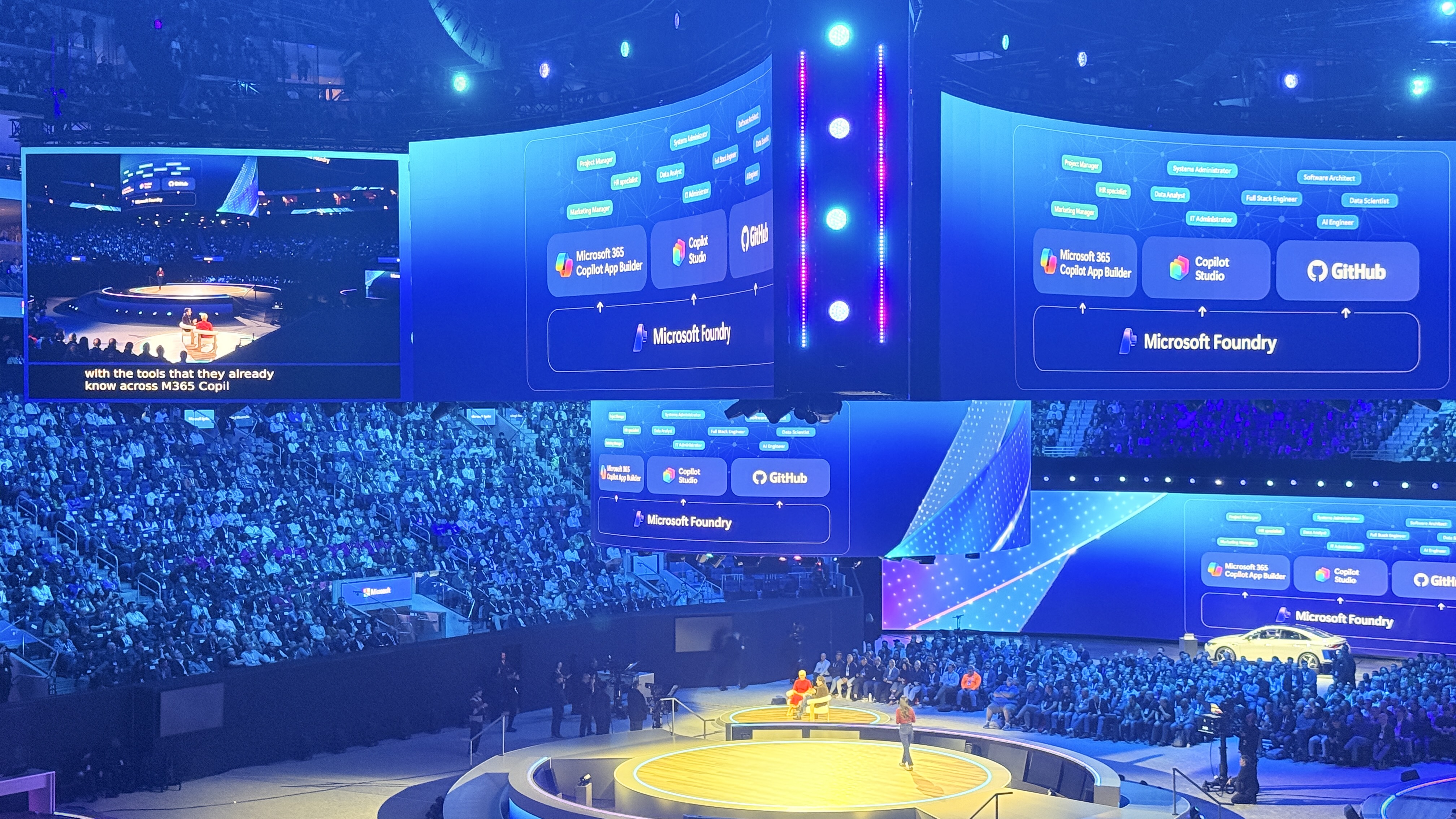

5 min read Docker has introduced comprehensive MCP (Model Context Protocol) tooling that enables organizations to build custom catalogs with complete control over AI tool access. With over 220+ containerized MCP servers available and the ability to create private catalogs, enterprises can now deploy AI tooling with appropriate security guardrails.

Deep Dive · May 25, 2025 · 9 min read

Development teams face significant challenges when integrating multiple AI models into their workflows. The proliferation of providers, APIs, and pricing models creates complexity that can slow innovation and increase technical debt. This article explores one solution: a Docker-based setup that combines the LiteLLM proxy with Open WebUI to streamline AI development for teams of all sizes.

Deep Dive · May 24, 2025 · 15 min read

In today’s fast-paced development landscape, AI coding assistants have become indispensable tools for developers seeking to maintain high-quality code while meeting demanding deadlines. GitHub Copilot stands at the forefront of this revolution, offering intelligent code suggestions that can significantly accelerate development. However, the true power of Copilot lies not just in its base capabilities, but in how effectively it can be customized to align with your specific project standards and best practices.

Deep Dive

·

Apr 24, 2025

·

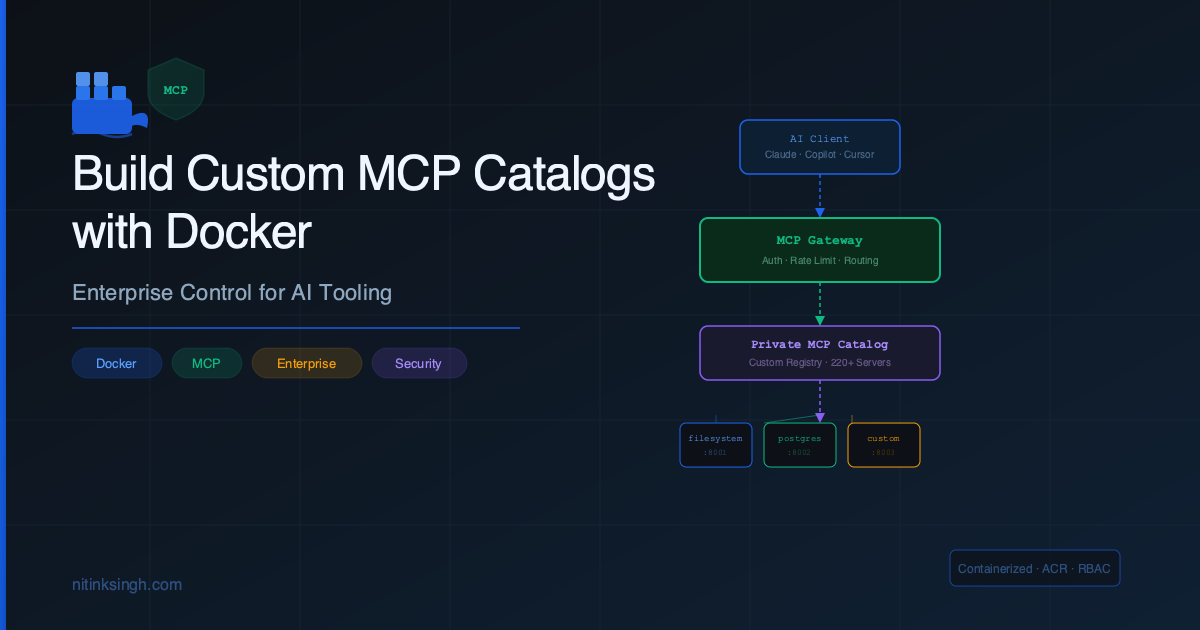

16 min read TL;DR: This guide walks you through building a production-ready RAG system using FastAPI, ChromaDB, MinIO, and OpenAI. Learn document chunking, vector embeddings, hybrid search, and real-world deployment strategies.

Introduction # As a .NET developer watching the AI landscape evolve, I found myself both excited and skeptical. When tools like Claude.ai and ChatGPT started offering out-of-the-box RAG solutions, I wanted to build my own system with full control over the implementation.

Deep Dive · Jan 11, 2025 · 9 min read

Large Language Models (LLMs) have become a cornerstone of modern AI applications, from customer-support chatbots to content generation tools. They power virtual assistants, automated translation systems, and personalized recommendation engines, showcasing their versatility across industries. However, running these models on a local machine has traditionally been complex and resource-intensive, requiring significant configuration and technical expertise. This complexity often deters beginners and even intermediate users who are eager to explore LLMs in a private, local environment.

Deep Dive · Jan 6, 2025 · 15 min read

TL;DR: This article demonstrates how to build a REST API that converts natural language into SQL queries using multiple LLM providers (OpenAI, Azure OpenAI, Claude, and Gemini). The system dynamically selects the appropriate AI service based on configuration, executes the generated SQL against a database, and returns structured results. It includes a complete implementation with a service factory pattern, Docker setup, and example usage.

Deep Dive · Dec 7, 2024 · 11 min read

Artificial Intelligence is transforming how we build applications, particularly in creating natural, conversational user experiences. This article guides you through building a full-stack AI chat application using .NET for the backend, Angular for the frontend, and Azure OpenAI for the language model capabilities, all connected through real-time SignalR communication.